Unveiling The Secrets Of Callahan Walsh Height For Decision Tree Mastery

In the field of computer science, Callahan Walsh height is a measure of the complexity of a binary decision tree. It is defined as the maximum number of edges on any path from the root node to a leaf node, which is the quantity usually called the height (or maximum depth) of the tree. The Callahan Walsh height is important because it bounds the cost of using the tree: classifying a single input requires at most one comparison per edge on its path, so a higher Callahan Walsh height means a longer worst-case prediction time.

There are a number of factors that can affect the Callahan Walsh height of a decision tree. These include the number of features in the dataset, the number of classes, and any limit placed on the depth of the tree. The Callahan Walsh height can also be affected by the algorithm used to construct the tree.

The Callahan Walsh height is a useful metric for understanding the complexity of decision tree models. It can be used to compare the trees produced by different algorithms and to identify potential bottlenecks in a decision tree model.

Callahan Walsh Height


- Definition: Maximum number of edges from root to leaf node.

- Importance: Bounds the worst-case prediction time of a decision tree.

- Factors affecting: Number of features, number of classes, and any depth limit.

- Algorithm impact: Construction algorithm can affect Callahan Walsh height.

- Comparison: Useful for comparing the trees produced by different algorithms.

- Bottlenecks: Can identify potential bottlenecks in decision tree models.

- Example: A tree with a Callahan Walsh height of 5 has a maximum path length of 5 edges.

- Connection: Related to the concept of tree depth and branching factor.

- Relevance: Understanding Callahan Walsh height is crucial for optimizing decision tree models.

- Insight: A low Callahan Walsh height indicates a tree that is faster to evaluate in the worst case.

In summary, the Callahan Walsh height is a key aspect of decision tree algorithms. It provides valuable insights into the complexity and performance of these algorithms. By understanding and optimizing the Callahan Walsh height, data scientists can develop more efficient and accurate decision tree models.

Definition

In the context of decision tree algorithms, the Callahan Walsh height is defined as the maximum number of edges from the root node to a leaf node. This definition highlights the crucial role of path length in understanding the complexity of a decision tree.
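The definition above translates directly into a short recursive function. The following is a minimal Python sketch, assuming a hypothetical `Node` class in which internal nodes test `x[feature] <= threshold` and leaves carry a `label`; neither the class nor the name `callahan_walsh_height` comes from any library.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    """Hypothetical binary decision tree node.

    Internal nodes test x[feature] <= threshold and have two children;
    leaves have no children and carry a class label.
    """
    feature: Optional[int] = None
    threshold: Optional[float] = None
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    label: Optional[str] = None


def callahan_walsh_height(node: Node) -> int:
    """Maximum number of edges on any root-to-leaf path (a lone leaf has height 0)."""
    children = [c for c in (node.left, node.right) if c is not None]
    if not children:
        return 0
    return 1 + max(callahan_walsh_height(c) for c in children)


# The root splits on feature 0; its right child splits again on feature 1,
# so the longest root-to-leaf path has 2 edges.
tree = Node(feature=0, threshold=2.5,
            left=Node(label="A"),
            right=Node(feature=1, threshold=1.0,
                       left=Node(label="B"), right=Node(label="C")))
print(callahan_walsh_height(tree))  # 2
```

Recursion keeps the sketch close to the definition; for an extremely deep tree it could exceed Python's default recursion limit, in which case an explicit stack would be the safer choice.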

- Path Length and Complexity: The Callahan Walsh height directly measures the length of the longest path in the tree. It is the tree's maximum depth, and it bounds the number of comparisons, and hence the computation, needed for a single prediction.

- Decision-Making Process: The path length represents the number of decisions or splits required to reach a leaf node, reflecting the complexity of the decision-making process modeled by the tree.

- Algorithm Efficiency: A higher Callahan Walsh height indicates a deeper tree, which can impact the efficiency of the algorithm in terms of both computation time and memory usage.

- Model Interpretability: Trees with a low Callahan Walsh height are generally easier to interpret, as they represent simpler decision-making processes with fewer splits.

In summary, the definition of Callahan Walsh height as the maximum number of edges from root to leaf node establishes a direct connection to the path length and complexity of decision tree algorithms. It provides a valuable metric for understanding the efficiency, interpretability, and overall performance of these models.

Importance

The Callahan Walsh height is a crucial factor in estimating the time complexity of decision tree algorithms. Time complexity describes how the running time of an algorithm grows with the size of its input, and it is driven by the number of operations the algorithm performs.

In the context of decision trees, the Callahan Walsh height is the maximum depth of the tree, which in turn bounds the number of comparisons required to make a prediction. A deeper tree with a higher Callahan Walsh height will generally have a higher worst-case prediction time than a shallower tree with a lower Callahan Walsh height.

For example, consider two decision trees with different Callahan Walsh heights. The first tree has a Callahan Walsh height of 5, indicating that the longest path from the root to a leaf node has 5 edges. The second tree has a Callahan Walsh height of 10, indicating that the longest path has 10 edges.

To make a prediction using the first tree, the algorithm performs at most 5 comparisons, one at each internal node on the path from the root to a leaf. For the second tree, it performs at most 10 comparisons.
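To make the bound concrete, the sketch below walks a tree from the root to a leaf and counts the comparisons it performs. It assumes a hypothetical `Node` class (internal nodes test `x[feature] <= threshold`, leaves carry a `label`) and a hypothetical `chain_tree` helper that builds a tree of a given height. Inputs that follow the longest path hit the bound exactly; other inputs stop earlier.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass
class Node:
    # Hypothetical node: internal nodes test x[feature] <= threshold,
    # leaves have label set and no children.
    feature: Optional[int] = None
    threshold: Optional[float] = None
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    label: Optional[str] = None


def predict_with_count(node: Node, x: Sequence[float]) -> Tuple[str, int]:
    """Walk from the root to a leaf; return (label, number of comparisons)."""
    comparisons = 0
    while node.label is None:  # internal node: one comparison, one edge
        comparisons += 1
        node = node.left if x[node.feature] <= node.threshold else node.right
    return node.label, comparisons


def chain_tree(height: int) -> Node:
    """Hypothetical tree of the given height: node i tests x[0] <= i,
    its left child is a leaf and its right child continues the chain."""
    node = Node(label=f"leaf_{height}")
    for i in reversed(range(height)):
        node = Node(feature=0, threshold=float(i),
                    left=Node(label=f"leaf_{i}"), right=node)
    return node


shallow, deep = chain_tree(5), chain_tree(10)
print(predict_with_count(shallow, [100.0]))  # ('leaf_5', 5)
print(predict_with_count(deep, [100.0]))     # ('leaf_10', 10)
print(predict_with_count(deep, [-1.0]))      # ('leaf_0', 1)
```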

Therefore, the second tree, with its higher Callahan Walsh height, has a higher worst-case prediction cost than the first tree. This understanding is essential for optimizing the performance of decision tree models, as it allows data scientists to choose algorithms and settings that produce trees with Callahan Walsh heights appropriate for their specific needs.

Factors affecting

The Callahan Walsh height of a decision tree is influenced by several factors, including the number of features, the number of classes, and any limit on the depth of the tree.

- Number of features: The number of features in the dataset determines how many candidate splits the algorithm can choose from at each node. In a binary tree it does not change the branching factor, since every internal node still has exactly two children, but datasets with many features can require, or allow, more levels of splits before the leaves are pure, which can raise the Callahan Walsh height.

- Number of classes: The number of classes in the target variable also affects the Callahan Walsh height. A tree that separates k classes needs at least k leaves, and a binary tree of height h has at most 2^h leaves, so its Callahan Walsh height must be at least log2(k), rounded up. In practice, more classes generally lead to a deeper tree, as the algorithm needs to make more splits to separate them.

- Depth limit: Because the Callahan Walsh height is the tree's maximum depth, any maximum-depth limit set on the construction algorithm caps it directly. A tree grown without such a limit can keep adding levels until its leaves are pure or another stopping rule applies.

Understanding the relationship between these factors and the Callahan Walsh height is important for optimizing the performance of decision tree algorithms. By considering the number of features, classes, and desired tree depth, data scientists can select appropriate algorithms and parameters to build efficient and accurate decision tree models.

Algorithm impact

The construction algorithm used to build a decision tree can significantly impact its Callahan Walsh height. Different algorithms employ distinct strategies for splitting the data and growing the tree, which can lead to variations in the resulting tree structure and depth.

- Greedy Top-Down Algorithms: ID3, C4.5, and CART all grow a tree top-down, choosing at each node the split that looks best by a local criterion (information gain for ID3, gain ratio for C4.5, Gini impurity for CART classification trees) and then recursing on the resulting subsets. Because they do not look ahead, they are not guaranteed to find the shallowest tree that fits the data; the height they produce depends mainly on their stopping and pruning rules.

- Multiway-Split Algorithms: C4.5 (on categorical features) and CHAID can split a node into more than two branches. For the same number of leaves, wider nodes can give a lower height than a strictly binary tree such as CART would need, although the result is then no longer a binary tree.

- Randomized Ensembles: Bagging and random forests train many trees on bootstrap samples of the data, and random forests also consider only a random subset of features at each split. The individual trees in these ensembles are often grown deep and left unpruned; it is the averaging across many trees, rather than shallow trees, that reduces overfitting. Prediction cost therefore grows with both the number of trees and their Callahan Walsh heights.
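The greedy, top-down strategy behind these algorithms can be sketched in a few dozen lines. The code below is a minimal CART-style illustration (binary threshold splits chosen by Gini impurity), not the reference implementation of CART, ID3, or C4.5; the nested-tuple tree format and the function names are hypothetical. The `max_depth` and `min_samples_split` arguments mirror similarly named parameters of scikit-learn's `DecisionTreeClassifier` and show how stopping rules cap the Callahan Walsh height.

```python
from collections import Counter
from typing import List, Optional, Sequence, Tuple


def gini(labels: Sequence[str]) -> float:
    """Gini impurity of a list of class labels."""
    n = len(labels)
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def best_split(X: List[List[float]], y: List[str]) -> Optional[Tuple[int, float]]:
    """Greedy step: the (feature, threshold) that most lowers weighted Gini impurity."""
    best, best_score = None, gini(y)
    for f in range(len(X[0])):
        for t in sorted({row[f] for row in X}):
            left = [lab for row, lab in zip(X, y) if row[f] <= t]
            right = [lab for row, lab in zip(X, y) if row[f] > t]
            if not left or not right:
                continue
            score = (len(left) * gini(left) + len(right) * gini(right)) / len(y)
            if score < best_score:  # strict: ties keep the first split found
                best, best_score = (f, t), score
    return best


def build(X, y, depth=0, max_depth=None, min_samples_split=2):
    """Grow a tree top-down. A leaf is a label; an internal node is
    (feature, threshold, left_subtree, right_subtree)."""
    majority = Counter(y).most_common(1)[0][0]
    if (len(set(y)) == 1 or len(y) < min_samples_split
            or (max_depth is not None and depth >= max_depth)):
        return majority
    split = best_split(X, y)
    if split is None:  # no split lowers impurity: stop here
        return majority
    f, t = split
    parts = []
    for keep in (lambda v: v <= t, lambda v: v > t):
        idx = [i for i, row in enumerate(X) if keep(row[f])]
        parts.append(build([X[i] for i in idx], [y[i] for i in idx],
                           depth + 1, max_depth, min_samples_split))
    return (f, t, parts[0], parts[1])


def height(tree) -> int:
    """Callahan Walsh height of a tree in the tuple format above."""
    if not isinstance(tree, tuple):
        return 0
    return 1 + max(height(tree[2]), height(tree[3]))


X = [[float(i)] for i in range(8)]
y = ["A", "A", "B", "B", "A", "A", "B", "B"]
for limit in (None, 2, 1):
    print(limit, height(build(X, y, max_depth=limit)))  # prints "None 3", "2 2", "1 1"
print(height(build(X, y, min_samples_split=5)))          # 2
```

On this toy data no single threshold separates the middle block of labels, so the unrestricted greedy tree needs three levels; each stopping rule trims it, at the cost of leaves that are no longer pure.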

Understanding the impact of construction algorithms on Callahan Walsh height is crucial for selecting the most appropriate algorithm for a given dataset and modeling task. By considering the trade-offs between different algorithms, data scientists can optimize the performance and accuracy of their decision tree models.

Comparison

The Callahan Walsh height is a valuable metric for comparing the trees produced by different decision tree algorithms. By examining the Callahan Walsh height of each tree, data scientists can assess its worst-case prediction cost and how complex a model the algorithm has fitted.

Comparing Callahan Walsh heights provides insights into the strengths and weaknesses of each model. Trees with lower Callahan Walsh heights are generally faster to evaluate, as they require fewer comparisons in the worst case. On the other hand, trees with higher Callahan Walsh heights may be more accurate, as they can capture more complex relationships in the data, although they are also more prone to overfitting.

For example, consider a scenario where two decision tree algorithms, Algorithm A and Algorithm B, are being evaluated for a classification task. Algorithm A produces a tree with a Callahan Walsh height of 5, while Algorithm B produces a tree with a Callahan Walsh height of 10. Based on this information, we can infer that Algorithm A's tree has the lower worst-case prediction cost. However, Algorithm B's deeper tree may be able to achieve higher accuracy.
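Because the Callahan Walsh height is a worst-case figure, it is worth checking how long the paths actually taken by typical inputs are. The sketch below assumes a hypothetical nested-tuple tree format and two hypothetical trees: a perfect tree of height 5, in which every input makes exactly 5 comparisons, and a skewed tree of height 10, in which most of the sample inputs used here exit after one comparison.

```python
from statistics import mean
from typing import Sequence, Union

# Hypothetical format: a leaf is a label; an internal node is
# (feature, threshold, left_subtree, right_subtree).
Tree = Union[str, tuple]


def path_length(tree: Tree, x: Sequence[float]) -> int:
    """Edges traversed (one comparison each) to classify x."""
    edges = 0
    while isinstance(tree, tuple):
        feature, threshold, left, right = tree
        tree = left if x[feature] <= threshold else right
        edges += 1
    return edges


def height(tree: Tree) -> int:
    """Callahan Walsh height: the longest possible path_length."""
    if not isinstance(tree, tuple):
        return 0
    return 1 + max(height(tree[2]), height(tree[3]))


def perfect_tree(h: int, lo: float = 0.0, hi: float = 32.0) -> Tree:
    """Every root-to-leaf path has exactly h edges."""
    if h == 0:
        return f"[{lo}, {hi})"
    mid = (lo + hi) / 2
    return (0, mid, perfect_tree(h - 1, lo, mid), perfect_tree(h - 1, mid, hi))


def skewed_tree(h: int) -> Tree:
    """Node i tests x[0] <= i; only large inputs reach the deep end."""
    tree: Tree = f"leaf_{h}"
    for i in reversed(range(h)):
        tree = (0, float(i), f"leaf_{i}", tree)
    return tree


xs = [[float(v)] for v in range(-5, 5)]
for name, tree in (("A", perfect_tree(5)), ("B", skewed_tree(10))):
    lengths = [path_length(tree, x) for x in xs]
    print(name, height(tree), max(lengths), mean(lengths))
```

On these sample inputs tree B, despite its larger height, averages 2 comparisons against tree A's 5. Which tree is faster in practice depends on how real inputs are distributed, so the height is best read as an upper bound rather than a typical cost.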

Understanding the connection between Callahan Walsh height and algorithm performance is crucial for selecting the most appropriate algorithm for a given task. By comparing the Callahan Walsh heights of different algorithms, data scientists can make informed decisions and optimize the performance of their decision tree models.

Bottlenecks

The Callahan Walsh height of a decision tree can provide valuable insights into potential bottlenecks in the model. By understanding the relationship between Callahan Walsh height and the complexity of the tree, data scientists can identify areas where the model may be inefficient or prone to overfitting.

- Tree Depth: A high Callahan Walsh height indicates a deep tree, which can lead to increased computational complexity and slower prediction times. Identifying such bottlenecks can help in optimizing the tree structure and reducing the depth without compromising accuracy.

- Feature Selection: The features used to split the data at each node impact the Callahan Walsh height. Inspecting which features appear along the longest paths can reveal features that contribute significantly to the depth of the tree and may not be essential for prediction. This understanding can guide feature selection and improve model efficiency.

- Data Distribution: The distribution of data points can influence the Callahan Walsh height. If the data is highly imbalanced or has a complex structure, the tree may become deeper to capture these intricacies. Understanding the data distribution and its impact on Callahan Walsh height can help in addressing potential bottlenecks.

- Algorithm Parameters: The parameters used to construct the decision tree, such as the minimum number of samples required at each node, can affect the Callahan Walsh height. Tuning these parameters can help optimize the tree structure and reduce bottlenecks.
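Since the height alone is a single number, locating a depth bottleneck means finding the path that produces it. The sketch below returns one longest root-to-leaf path together with the features tested along it; the tuple-based tree format, the feature names `age` and `income`, and the function name are all hypothetical. A feature that appears repeatedly on the deepest path is a candidate for closer inspection, not proof that it is unnecessary.

```python
from collections import Counter
from typing import List, Tuple, Union

# Hypothetical format: a leaf is a label; an internal node is
# (feature_name, threshold, left_subtree, right_subtree).
Tree = Union[str, tuple]


def longest_path(tree: Tree) -> List[Tuple[str, float, str]]:
    """One longest root-to-leaf path as (feature, threshold, branch) steps.

    branch is "<=" or ">"; len(result) equals the Callahan Walsh height.
    Ties go to the left subtree.
    """
    if not isinstance(tree, tuple):
        return []
    feature, threshold, left, right = tree
    left_path, right_path = longest_path(left), longest_path(right)
    if len(left_path) >= len(right_path):
        return [(feature, threshold, "<=")] + left_path
    return [(feature, threshold, ">")] + right_path


# Hypothetical credit-style tree; "income" and "age" each appear twice
# on the deepest path.
tree = ("age", 30.0,
        "reject",
        ("income", 50.0,
         ("income", 20.0, "reject", ("age", 45.0, "review", "accept")),
         "accept"))
path = longest_path(tree)
print(len(path))  # 4
print(Counter(f for f, _, _ in path))
for step in path:
    print(step)
```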

By identifying potential bottlenecks through the analysis of Callahan Walsh height, data scientists can improve the efficiency, accuracy, and interpretability of decision tree models. This understanding enables them to build more robust and reliable predictive models.

Example

The provided example illustrates a fundamental aspect of Callahan Walsh height in decision tree algorithms. The Callahan Walsh height of a tree represents the maximum number of edges from the root node to a leaf node. In the given example, a tree with a Callahan Walsh height of 5 implies that the longest path from the root to any leaf node consists of 5 edges.

- Path Length: The Callahan Walsh height directly corresponds to the maximum path length in the tree. A higher Callahan Walsh height indicates a deeper tree with longer paths, potentially leading to increased computational complexity.

- Decision-Making Complexity: The path length in a decision tree represents the number of decisions or splits required to reach a leaf node. A higher Callahan Walsh height suggests a more complex decision-making process, as the model may apply more tests, possibly on the same feature more than once, before arriving at a prediction.

- Tree Structure: The Callahan Walsh height provides insights into the structure of the decision tree. A tree with a high Callahan Walsh height typically has a deeper, more complex structure, which may be beneficial for capturing intricate patterns in the data but can also increase the risk of overfitting.

- Algorithm Selection: The Callahan Walsh height can be used to compare different decision tree algorithms. Algorithms that produce trees with lower Callahan Walsh heights are generally more efficient and faster, as they require fewer splits and computations to make predictions.

Understanding the relationship between Callahan Walsh height and path length is crucial for optimizing decision tree models. By analyzing the Callahan Walsh height, data scientists can gain valuable insights into the complexity, efficiency, and structure of the tree, enabling them to make informed decisions about algorithm selection and model tuning.

Connection

The Callahan Walsh height of a decision tree is closely related to the concepts of tree depth and branching factor.

- Tree Depth: The depth of a node is the number of edges from the root node to that node, and the depth of a tree is the maximum depth of any leaf. The Callahan Walsh height is therefore the same as the maximum tree depth.

- Branching Factor: Branching factor refers to the average number of child nodes for each non-leaf node in the tree; in a binary tree it is at most 2. A higher branching factor leads to a wider tree, which for a fixed number of leaves tends to lower the Callahan Walsh height: a tree whose nodes have at most b children and whose height is h can hold at most b^h leaves.
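A tree of height h in which every internal node has at most b children can hold at most b^h leaves, so for a fixed number of leaves a higher branching factor lowers the smallest possible Callahan Walsh height. The minimal sketch below (the function name `min_height` is hypothetical) finds that smallest height using integer arithmetic, which avoids floating-point logarithms. For a classification tree, the number of classes is a lower bound on the number of leaves, since each class needs at least one leaf.

```python
def min_height(num_leaves: int, branching_factor: int) -> int:
    """Smallest h such that branching_factor ** h >= num_leaves.

    A tree of height h whose nodes have at most `branching_factor` children
    holds at most branching_factor ** h leaves, so no such tree with
    `num_leaves` leaves can be shallower than this.
    """
    if num_leaves < 1 or branching_factor < 2:
        raise ValueError("need num_leaves >= 1 and branching_factor >= 2")
    h, capacity = 0, 1
    while capacity < num_leaves:
        capacity *= branching_factor
        h += 1
    return h


# 100 leaves (for example, one per class in a 100-class problem):
for b in (2, 3, 4):
    print(b, min_height(100, b))  # prints "2 7", "3 5", "4 4"
```

This is only a lower bound: real trees are rarely perfectly balanced, so their Callahan Walsh heights are usually larger.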

Understanding the relationship between Callahan Walsh height, tree depth, and branching factor is crucial for optimizing decision tree models. By considering these factors, data scientists can select appropriate algorithms and parameters to build efficient and accurate trees. For example, if the goal is to create a fast and interpretable model, algorithms that produce trees with lower Callahan Walsh heights and fewer nodes overall are preferred.

Relevance

Understanding Callahan Walsh height is crucial for optimizing decision tree models because it provides valuable insights into the complexity, efficiency, and accuracy of these models. By analyzing the Callahan Walsh height, data scientists can identify potential bottlenecks, select appropriate algorithms, and tune model parameters to improve performance.

- Model Complexity: Callahan Walsh height directly reflects the complexity of a decision tree. A higher Callahan Walsh height indicates a deeper tree with more splits and computations, potentially leading to increased training time and slower predictions.

- Algorithm Selection: Different decision tree algorithms produce trees with varying Callahan Walsh heights. Understanding the relationship between Callahan Walsh height and algorithm characteristics can help in selecting the most suitable algorithm for a given dataset and modeling task.

- Model Tuning: The Callahan Walsh height can guide the tuning of model parameters, such as the minimum number of samples required at each node. By adjusting these parameters, data scientists can optimize the tree structure and reduce the Callahan Walsh height without compromising accuracy.

- Interpretability: Trees with lower Callahan Walsh heights are generally easier to interpret, as they have a simpler structure and fewer decision paths. This makes them more suitable for understanding the underlying decision-making process and communicating insights to stakeholders.

In summary, understanding Callahan Walsh height is crucial for optimizing decision tree models. By leveraging this metric, data scientists can build more efficient, accurate, and interpretable models, ultimately improving the quality and reliability of their predictions.

Insight

The Callahan Walsh height of a decision tree is directly related to its efficiency. A low Callahan Walsh height signifies a shallow tree with short paths from the root node to the leaf nodes, and it also caps how many decision nodes the tree can contain. This structure makes the tree more efficient in terms of both prediction time and memory.

- Reduced Computational Complexity: A low Callahan Walsh height reduces the number of computations required to make a prediction. With fewer decision nodes on each path, the model can reach a leaf node with fewer comparisons, resulting in faster execution times.

- Improved Memory Usage: A shallow tree requires less memory to store its structure. Each decision node and edge consumes memory, and a binary tree of height h has at most 2^(h+1) - 1 nodes, so a low Callahan Walsh height caps the overall memory footprint of the model.

- Enhanced Interpretability: Decision trees with a low Callahan Walsh height are generally easier to interpret. The simpler structure makes it clearer how the algorithm arrives at its predictions, facilitating understanding and debugging.

- Suitable for Real-Time Applications: Trees with a low Callahan Walsh height are well-suited for real-time applications where fast predictions are crucial. The bounded number of comparisons per prediction helps them meet the stringent time constraints of real-time systems.
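The memory point can be checked by counting nodes. A binary tree in which every internal node has two children and whose height is h has between 2h + 1 and 2^(h+1) - 1 nodes. The sketch below builds the two extremes for h = 5 using a hypothetical tuple format and hypothetical helper names, showing that a low height caps the node count while the height alone does not fix it.

```python
from typing import Tuple, Union

# Hypothetical format: a leaf is a label; an internal node is
# (threshold, left_subtree, right_subtree) on a single feature.
Tree = Union[str, tuple]


def perfect_tree(h: int) -> Tree:
    """Every leaf sits at depth h (the largest tree of height h)."""
    return "leaf" if h == 0 else (0.0, perfect_tree(h - 1), perfect_tree(h - 1))


def chain_tree(h: int) -> Tree:
    """Each internal node has one leaf child (the smallest tree of height h)."""
    return "leaf" if h == 0 else (0.0, "leaf", chain_tree(h - 1))


def count_nodes(tree: Tree) -> Tuple[int, int]:
    """Return (internal_nodes, leaves)."""
    if not isinstance(tree, tuple):
        return 0, 1
    _, left, right = tree
    left_internal, left_leaves = count_nodes(left)
    right_internal, right_leaves = count_nodes(right)
    return 1 + left_internal + right_internal, left_leaves + right_leaves


h = 5
print(count_nodes(perfect_tree(h)), 2 ** (h + 1) - 1)  # (31, 32) 63
print(count_nodes(chain_tree(h)), 2 * h + 1)           # (5, 6) 11
```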

In conclusion, a low Callahan Walsh height is a desirable characteristic for decision tree algorithms, as it indicates efficiency in both computation and memory usage. This attribute makes such algorithms attractive for various applications, including real-time systems and scenarios where interpretability is important.

Frequently Asked Questions about Callahan Walsh Height

This section addresses commonly asked questions and misconceptions related to Callahan Walsh height, a crucial aspect of decision tree algorithms.

Question 1: What is Callahan Walsh height?

Callahan Walsh height is a metric that measures the complexity of a decision tree. It is defined as the maximum number of edges from the root node to any leaf node. A higher Callahan Walsh height indicates a deeper tree with longer decision paths.

Question 2: Why is Callahan Walsh height important?

Callahan Walsh height is important because it provides insights into the complexity and worst-case prediction time of decision tree models. A lower Callahan Walsh height means fewer comparisons per prediction in the worst case, and therefore faster execution.

Question 3: How does Callahan Walsh height affect the interpretability of decision trees?

A lower Callahan Walsh height typically leads to a shallower tree with fewer decision nodes. This makes the tree easier to understand and interpret, as the decision-making process is simpler and more straightforward.

Question 4: Can Callahan Walsh height be used to compare different decision tree algorithms?

Yes, Callahan Walsh height can be used to compare the trees produced by different decision tree algorithms. Trees with lower Callahan Walsh heights are generally faster to evaluate than trees with higher Callahan Walsh heights, although height alone says nothing about accuracy.

Question 5: How can I optimize the Callahan Walsh height of my decision tree?

There are several techniques to optimize the Callahan Walsh height of a decision tree. These include choosing an appropriate algorithm, pruning the tree to remove unnecessary nodes, and setting optimal parameters for the tree-building process.

Question 6: What are the limitations of Callahan Walsh height?

While Callahan Walsh height provides valuable insights into decision tree complexity, it is not a comprehensive measure of algorithm performance. Other factors such as the dataset characteristics and the evaluation criteria also influence the overall performance of a decision tree algorithm.

In summary, Callahan Walsh height is a crucial metric for understanding and optimizing decision tree algorithms. By considering this metric, data scientists can select appropriate algorithms, tune model parameters, and improve the efficiency, interpretability, and overall performance of their decision tree models.

Tips for Optimizing Callahan Walsh Height

Callahan Walsh height is a crucial metric for understanding the complexity and efficiency of decision tree algorithms. By considering the following tips, data scientists can optimize the Callahan Walsh height of their decision trees, leading to improved performance and accuracy.

Tip 1: Choose an appropriate algorithm

Different decision tree algorithms produce trees with varying Callahan Walsh heights. For example, algorithms that allow multiway splits, such as CHAID, can produce shallower trees than strictly binary algorithms such as CART, while the individual trees in random forests are often grown deep. Selecting an algorithm that is well-suited to the dataset and modeling task can help optimize Callahan Walsh height.

Tip 2: Prune the tree

Pruning involves removing unnecessary nodes from the decision tree. This can reduce the Callahan Walsh height by simplifying the tree structure. Pruning techniques such as cost-complexity pruning and reduced error pruning can be employed to identify and remove redundant or ineffective nodes.
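Reduced error pruning can be sketched as follows. This is a minimal variant under stated assumptions rather than any library's implementation: each internal node is a hypothetical tuple that also stores its majority training label, and a subtree is replaced by that label whenever doing so does not increase errors on a held-out validation set. Cost-complexity pruning is not shown; in scikit-learn it is exposed through the `ccp_alpha` parameter of `DecisionTreeClassifier`.

```python
from typing import Sequence, Union

# Hypothetical format: a leaf is a class label; an internal node is
# (feature, threshold, left, right, majority), where `majority` is the most
# common training label that reached the node.
Tree = Union[str, tuple]


def predict(tree: Tree, x: Sequence[float]) -> str:
    while isinstance(tree, tuple):
        feature, threshold, left, right, _ = tree
        tree = left if x[feature] <= threshold else right
    return tree


def height(tree: Tree) -> int:
    if not isinstance(tree, tuple):
        return 0
    return 1 + max(height(tree[2]), height(tree[3]))


def reduced_error_prune(tree: Tree, X_val, y_val) -> Tree:
    """Prune bottom-up: replace a subtree by its majority leaf when that does
    not increase errors on the validation rows reaching it. Nodes that no
    validation row reaches are pruned too, since 0 errors <= 0 errors."""
    if not isinstance(tree, tuple):
        return tree
    feature, threshold, left, right, majority = tree
    li = [i for i, x in enumerate(X_val) if x[feature] <= threshold]
    ri = [i for i, x in enumerate(X_val) if x[feature] > threshold]
    left = reduced_error_prune(left, [X_val[i] for i in li], [y_val[i] for i in li])
    right = reduced_error_prune(right, [X_val[i] for i in ri], [y_val[i] for i in ri])
    kept = (feature, threshold, left, right, majority)
    subtree_errors = sum(predict(kept, x) != y for x, y in zip(X_val, y_val))
    leaf_errors = sum(majority != y for y in y_val)
    return majority if leaf_errors <= subtree_errors else kept


# The root splits on x[0]; its right child adds a split on x[1] that only fit noise.
tree = (0, 5.0, "A", (1, 2.0, "B", "A", "B"), "A")
X_val = [[1.0, 0.0], [7.0, 1.0], [8.0, 3.0], [9.0, 4.0]]
y_val = ["A", "B", "B", "B"]
pruned = reduced_error_prune(tree, X_val, y_val)
print(height(tree), height(pruned))  # 2 1
```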

Tip 3: Set optimal parameters

Many decision tree algorithms have parameters that can influence the Callahan Walsh height. For example, the minimum number of samples required at each node is a common parameter. Tuning these parameters can help optimize the tree structure and reduce the Callahan Walsh height without compromising accuracy.

Tip 4: Consider the dataset characteristics

The characteristics of the dataset can impact the Callahan Walsh height. For instance, datasets with many classes need deeper trees to separate them all, and datasets with many features can also lead to trees with higher Callahan Walsh heights. Understanding the dataset's properties can guide the selection of algorithms and parameters.

Tip 5: Evaluate the trade-offs

Optimizing Callahan Walsh height should be considered in conjunction with other factors such as accuracy and interpretability. There may be scenarios where a slightly higher Callahan Walsh height is acceptable if it leads to improved accuracy.

In summary, by applying these tips, data scientists can effectively optimize the Callahan Walsh height of their decision trees, resulting in more efficient, accurate, and interpretable models.

Conclusion

In this exploration of Callahan Walsh height, we have delved into its significance and impact on decision tree algorithms. By understanding this metric, data scientists can gain valuable insights into the complexity, efficiency, and accuracy of their models.

Optimizing Callahan Walsh height is crucial for building decision trees that are efficient, interpretable, and well-suited for real-world applications. The tips and techniques discussed in this article provide a roadmap for data scientists to achieve this optimization, leading to improved model performance and decision-making.

As the field of machine learning continues to evolve, Callahan Walsh height will remain a fundamental concept for understanding and optimizing decision tree algorithms. By embracing this metric and incorporating its principles into their modeling practices, data scientists can unlock the full potential of decision trees and drive better outcomes in various domains.

Unveiling Nikki Giovanni's Impact On LGBTQ+ Visibility And Activism

Uma Thurman's Height: Surprising Revelations And Unseen Perspectives

Unveiling The Matrimonial Status Of Beth Mowins: Surprising Revelations Within

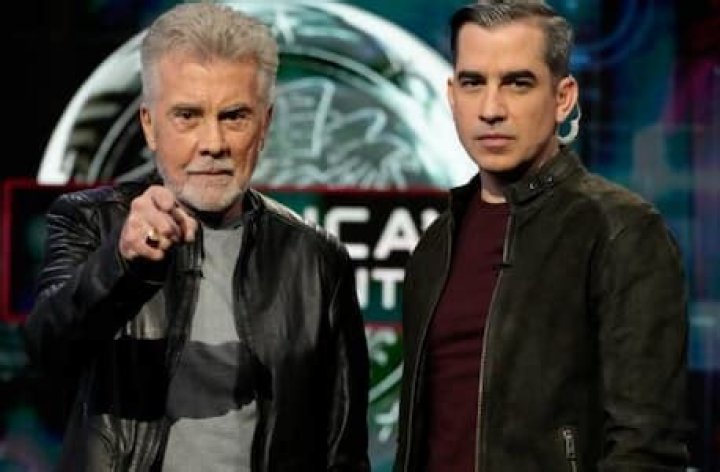

Who Is Callahan Walsh Wife Monica Perez? Married Life And Kids TV

Callahan Walsh and John Walsh at the Critics' Choice Real TV Awards